Deploy Your Own AI Chatbot Using OpenAI and Vercel

A brief guide to deploying your own AI chatbot using OpenAI and Vercel.

I'm not a fan of clichés, but you'd have to be living under a rock to have missed the deluge of AI chatbots over the past few weeks. An API and some credits were all it took to unleash the creativity of pros and pseudo-coders alike. OpenAI has led this revolution so far, but other startups are also rushing to market with models, infrastructure and toolsets to capture the generative AI boom. Like many others, I've been fascinated by the recent advancements and have been testing various projects to improve my understanding of this space. In this guide, I'll walk through deploying an AI chatbot using a pre-configured template from Vercel.

What is Vercel?

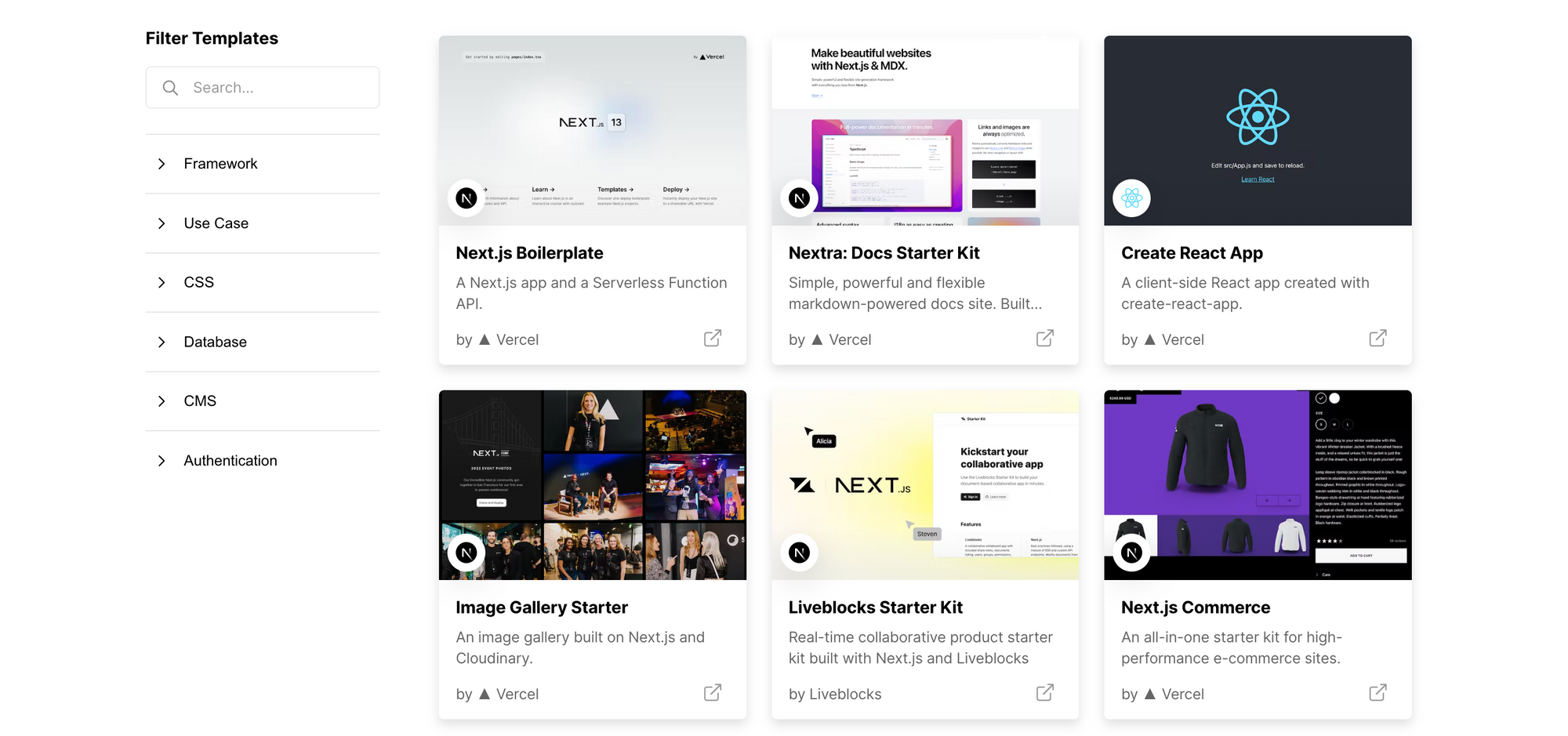

Vercel is a cloud platform for deploying static sites, serverless functions, and production-ready web apps quickly and with minimal effort. Vercel aims to simplify frontend development, offering a collaborative experience for teams with automatic previews, end-to-end testing on localhost, and easy integration with backends. While it is the native platform for Next.js, Vercel works equally well with 30+ frameworks and offers templates to jumpstart your app development.

Deploy an AI Chatbot Using a One-Click Vercel Template

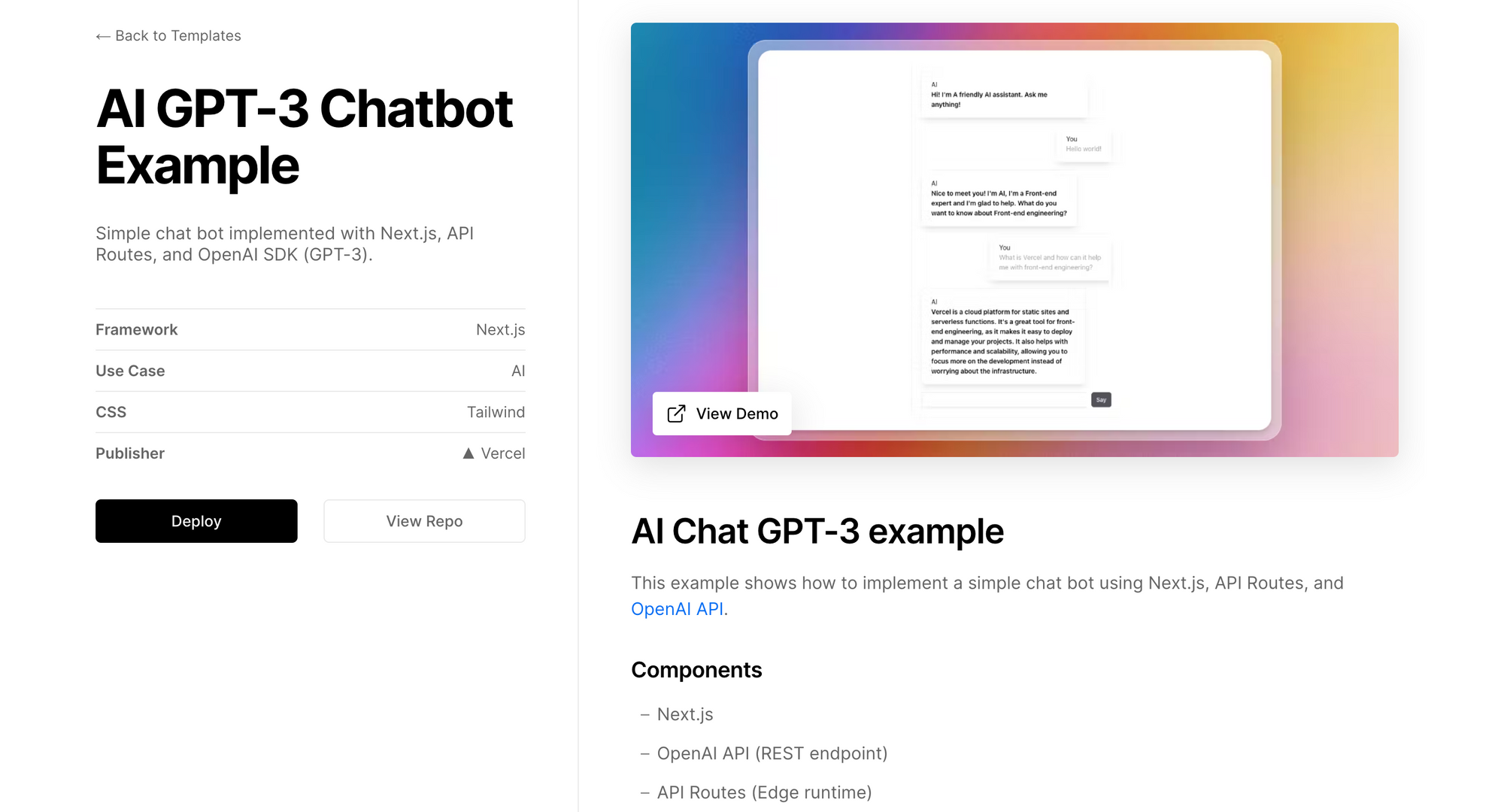

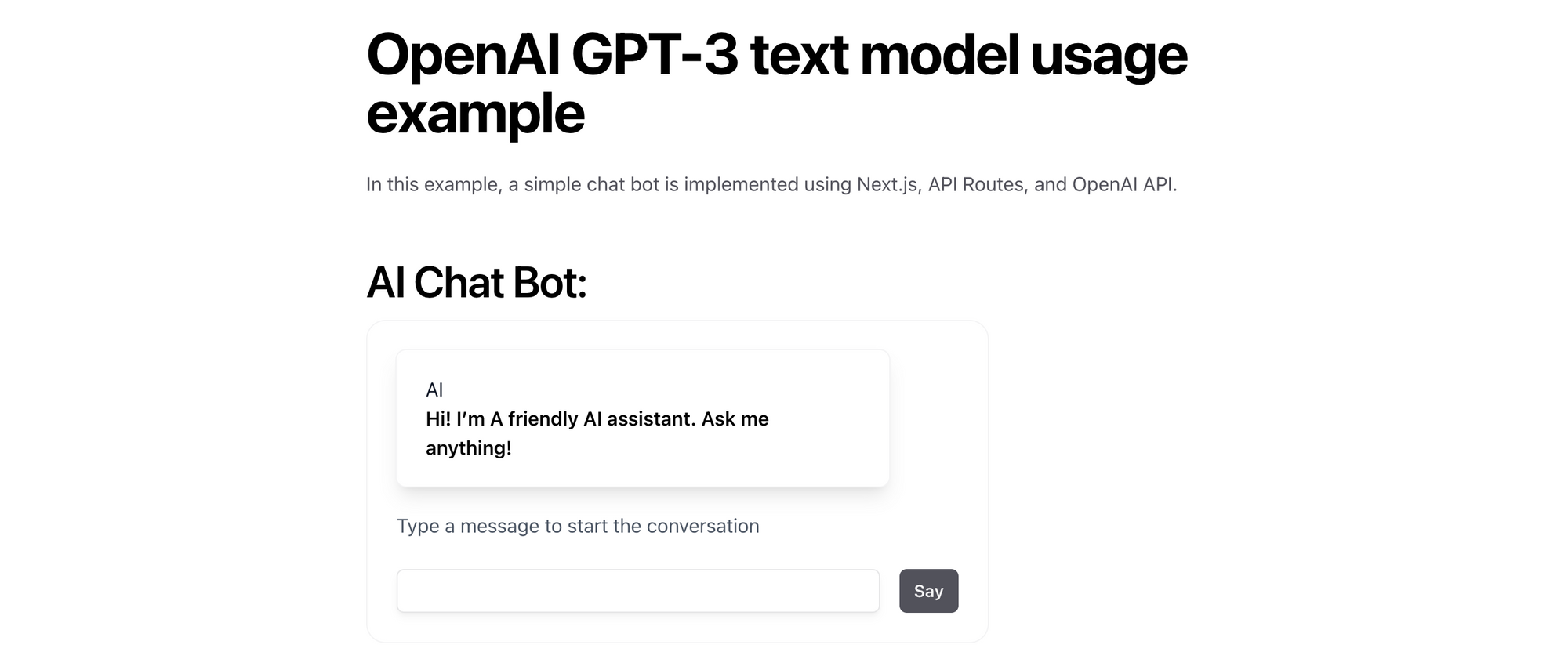

The template is a very simple chatbot built with Next.js, API Routes and OpenAI's GPT-3. If you don't already have an OpenAI account, create one, then create a new secret API key. OpenAI offers $18 in credits to eligible new users; if you don't see the credits, you may need to add a payment method to continue. Either way, set a usage limit you are comfortable with. Then launch the AI GPT-3 Chatbot starter template from Vercel.

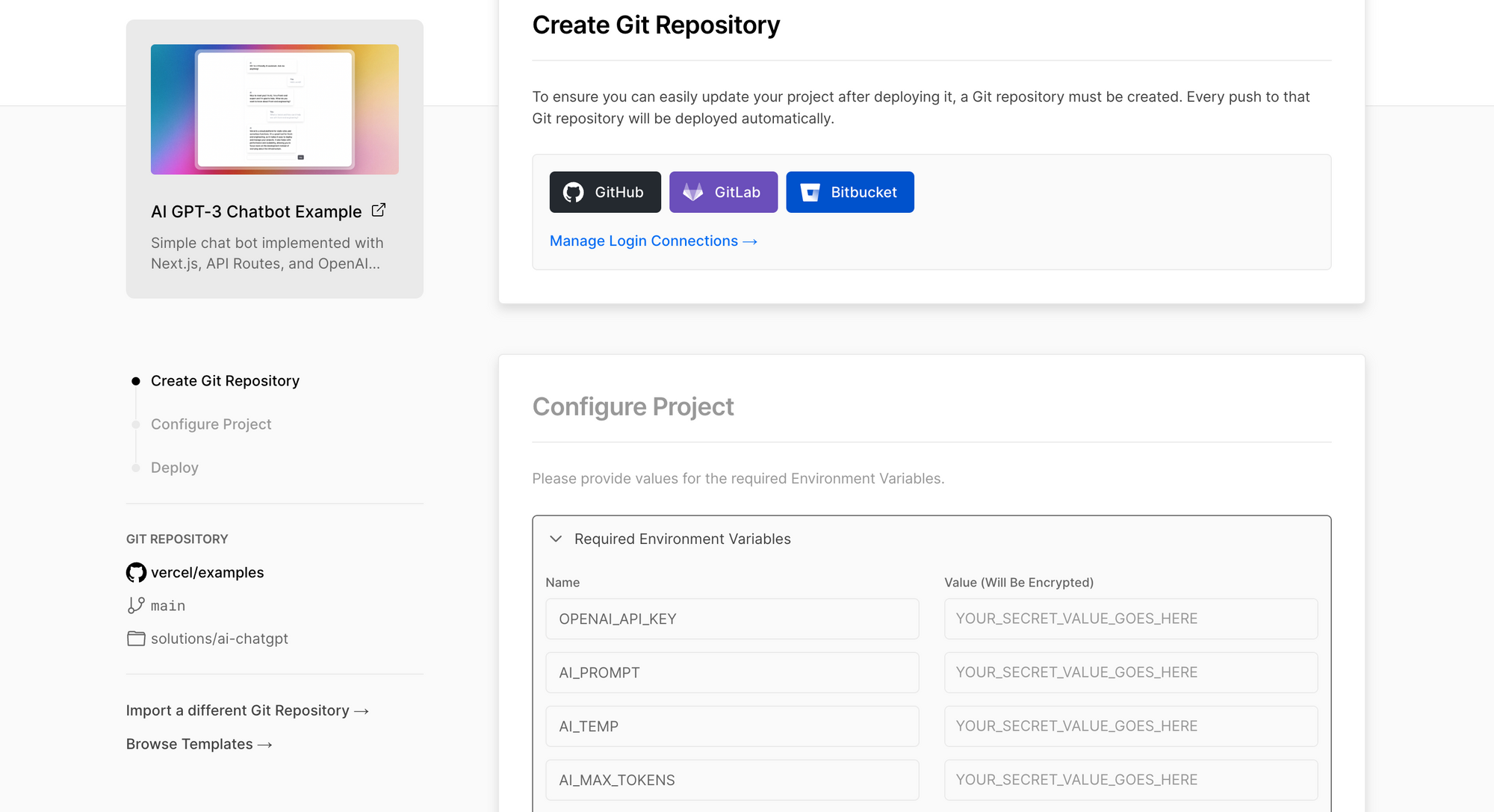

Click Deploy to start the deployment on Vercel. Connect your Git provider of choice (GitHub, GitLab or Bitbucket) and click Create to clone the template into a repository of your own.

Configure the following required environment variables and click Deploy.

- OPENAI_API_KEY: your OpenAI secret API key
- AI_PROMPT: a prompt that defines the bot's behaviour, knowledge and response style; empty by default
- AI_TEMP: the sampling temperature, which controls the randomness of the output; defaults to 0.7
- AI_MAX_TOKENS: the maximum length of each response, in tokens; defaults to 100
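To make the role of each variable concrete, here is a minimal Python sketch of how settings like these are typically read and turned into a request for OpenAI's Completions endpoint (`POST https://api.openai.com/v1/completions`). The template itself is a Next.js app, so this is not its code: `ChatbotConfig`, `load_config` and `build_completion_request` are hypothetical names, and prepending AI_PROMPT to the user's message is an assumption about how the prompt is assembled. The model name is the text-davinci-003 model the template uses.

```python
import os
from dataclasses import dataclass
from typing import Any, Dict, Mapping, Optional

MODEL = "text-davinci-003"  # the GPT-3 model used by the template


@dataclass(frozen=True)
class ChatbotConfig:
    api_key: str
    prompt: str
    temperature: float
    max_tokens: int


def load_config(env: Optional[Mapping[str, str]] = None) -> ChatbotConfig:
    """Read the environment variables, falling back to the documented defaults."""
    env = os.environ if env is None else env
    api_key = env.get("OPENAI_API_KEY", "")
    if not api_key:
        # Every OpenAI request would fail without a key, so fail fast instead.
        raise ValueError("OPENAI_API_KEY is not set")
    return ChatbotConfig(
        api_key=api_key,
        prompt=env.get("AI_PROMPT", ""),  # empty by default
        # `or` also treats a variable that is set but blank as unset.
        temperature=float(env.get("AI_TEMP") or "0.7"),
        max_tokens=int(env.get("AI_MAX_TOKENS") or "100"),
    )


def build_completion_request(cfg: ChatbotConfig, user_message: str) -> Dict[str, Any]:
    """Hypothetical request body for the Completions endpoint.

    Prepending AI_PROMPT to the user's message is an assumption; the template
    may format its prompt differently. The API key is not part of the body:
    it is sent separately in an `Authorization: Bearer <key>` header.
    """
    prompt = f"{cfg.prompt}\n\n{user_message}" if cfg.prompt else user_message
    return {
        "model": MODEL,
        "prompt": prompt,
        "temperature": cfg.temperature,
        "max_tokens": cfg.max_tokens,
    }
```

Calling `load_config()` with no argument reads the real process environment, which is where Vercel exposes the variables you enter in the deploy form; passing a plain dictionary instead makes the defaults easy to check locally.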

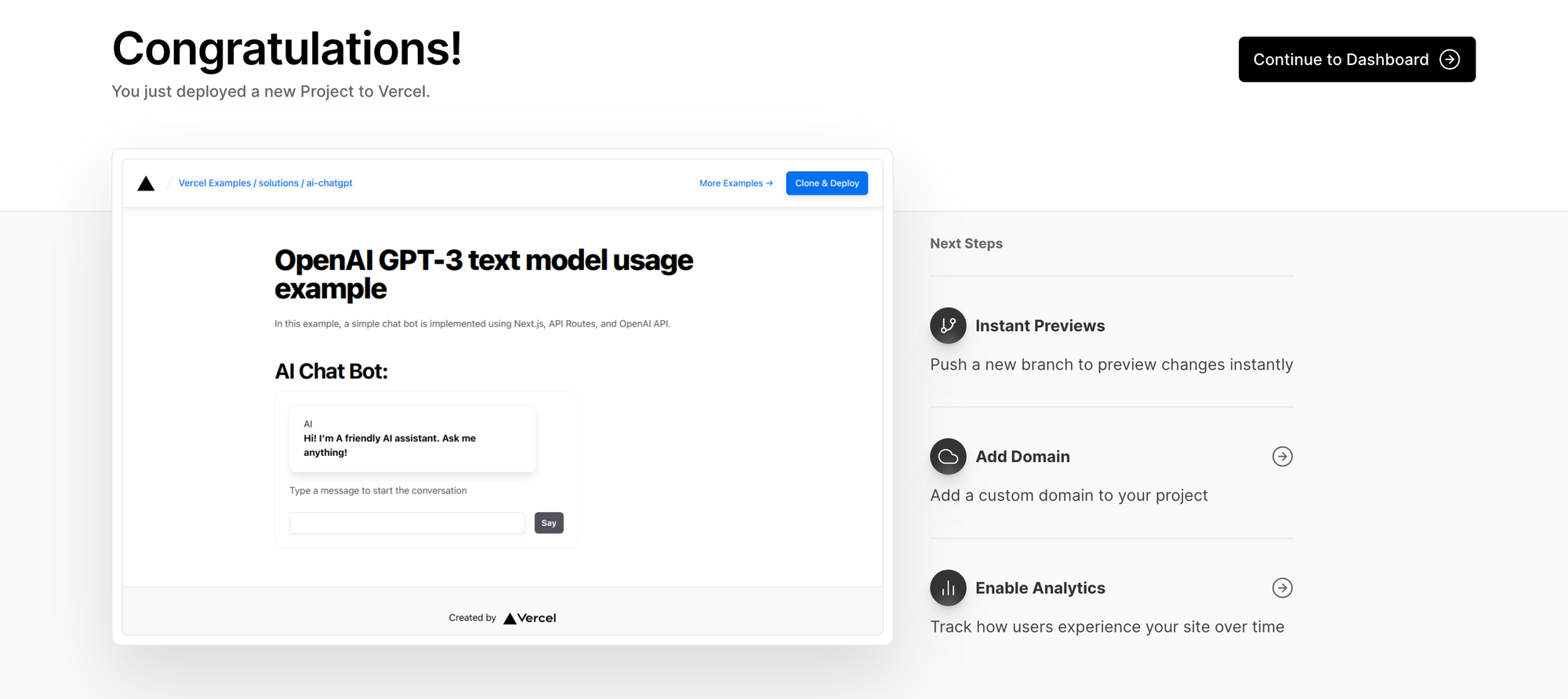

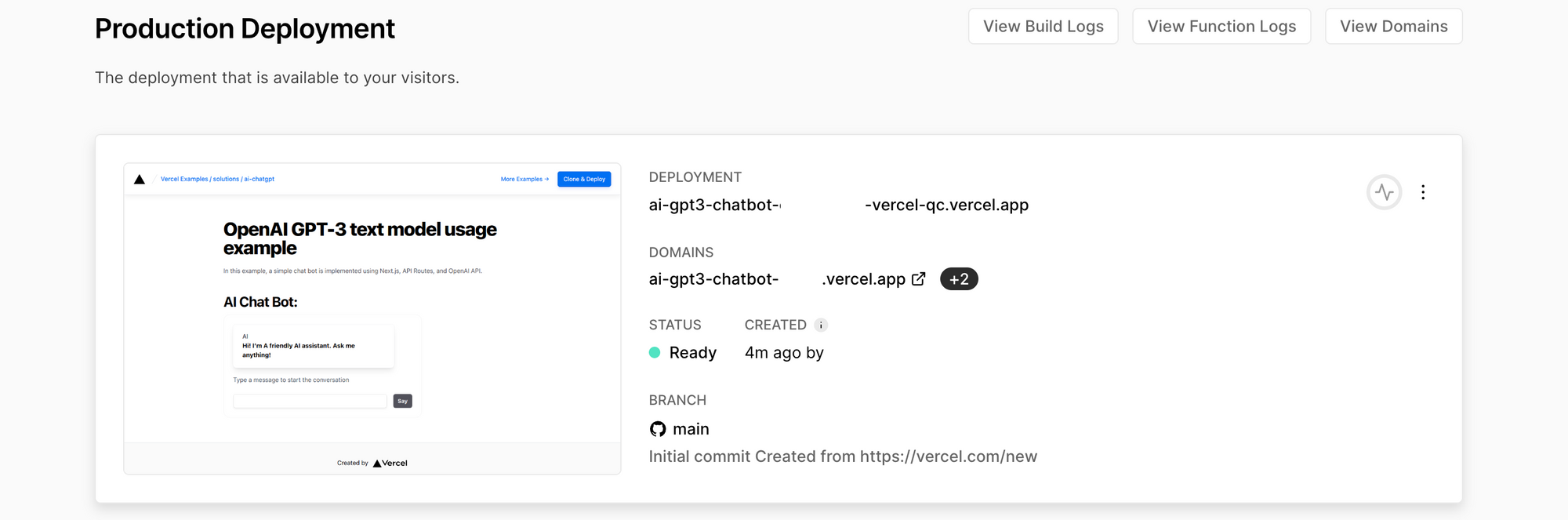

Vercel starts the deployment immediately: it builds the project, runs a few checks and publishes the chatbot on an auto-generated vercel.app domain. If you want to host the chatbot on a custom domain, see this guide.

Click the ai-gpt3-chatbot-***.vercel.app URL to launch your chatbot. The template uses OpenAI's text-davinci-003 GPT-3 model, the most capable model to date, with higher-quality output, longer responses and better instruction-following, but with training data only up to June 2021. So don't expect it to know about recent events! Davinci is also more expensive than models like ada, babbage and curie, costing $0.02 per 1,000 tokens (roughly 750 words).
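To get a feel for what that rate means per message, here is a small Python sketch of the arithmetic. It assumes prompt and completion tokens are both billed at the $0.02 davinci rate quoted above and uses the ~750 words per 1,000 tokens rule of thumb; the function names are illustrative. Actual token counts come from OpenAI's tokenizer, and the API reports them in the `usage` field of each response.

```python
import math
from decimal import Decimal

# Rates quoted in the text above; check OpenAI's pricing page for current values.
PRICE_PER_1K_TOKENS = Decimal("0.02")  # USD, davinci
WORDS_PER_1K_TOKENS = 750              # rough rule of thumb for English text


def words_to_tokens(words: int) -> int:
    """Estimate tokens from a word count, rounding up to stay conservative."""
    return math.ceil(words * 1000 / WORDS_PER_1K_TOKENS)


def request_cost(prompt_tokens: int, completion_tokens: int) -> Decimal:
    """USD cost of one request, assuming both token types share the same rate."""
    return (prompt_tokens + completion_tokens) * PRICE_PER_1K_TOKENS / 1000


def requests_per_budget(budget_usd: Decimal, cost_per_request: Decimal) -> int:
    """How many identical requests fit in a budget (whole requests only)."""
    return int(budget_usd // cost_per_request)
```

For example, a 150-word prompt (about 200 tokens) plus a full 100-token reply at the default AI_MAX_TOKENS costs about $0.006, so $18 in credits would cover roughly 3,000 such requests. `Decimal` is used instead of `float` so that money amounts stay exact.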

This walkthrough is only meant to get you started. If you intend to make your chatbot available to a wider audience, you'll want to test the effectiveness of different models and set up embeddings and semantic search, both to make the chatbot more relevant to your audience and to keep costs low. More on that later, though.
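As a brief preview of what semantic search involves: your documents and each user question are converted into embedding vectors (OpenAI offers an embeddings endpoint for this), and the documents closest to the question are retrieved and added to the prompt as context. The Python sketch below shows only the ranking step, using cosine similarity on made-up three-dimensional vectors. Real embeddings have hundreds or thousands of dimensions, and the article names and vectors here are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], docs: Dict[str, Sequence[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Return the k documents whose embeddings are most similar to the query."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional "embeddings" for hypothetical help-centre articles.
docs = {
    "pricing": [0.9, 0.1, 0.0],
    "deployment": [0.1, 0.9, 0.0],
    "custom-domains": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # stand-in embedding for "How much does this cost?"
print(top_k(query, docs, k=1))
```

Only the top-ranked articles are sent to the model, which keeps prompts short; that is one way embeddings help both relevance and cost.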